Deepfake Scam: Types, Risks & Real-Life Examples - Yono

09 Mar, 2026

cybersecurity

With the rapid growth of Artificial Intelligence (AI), cyber fraud is also becoming more advanced. One of the latest threats is the deepfake scam, where criminals use AI to create fake videos, voices or images that look real. These scams are increasingly being used in banking fraud, identity theft and financial scams.

Today, deepfake scams are not limited to celebrities or politics. They are now used in banking fraud, customer impersonation and digital payment fraud. Understanding what deepfake scams are, how they work and how to stay safe is important for everyone who uses digital services.

What Is a Deepfake Scam?

A deepfake scam is a type of fraud in which criminals use AI deepfake technology to create fake audio, video or images of real people. These fakes are then used to trick victims into sending money or sharing passwords and personal details.

AI deepfake technology can:

- Copy someone’s voice
- Create fake AI videos
- Generate fake AI images

Because victims see or hear what appears to be a person they trust, deepfake scams can be harder to spot than traditional online scams.

How Do Deepfake Scams Work?

Most AI deepfake scams follow a structured process:

1. Data Collection: Fraudsters collect photos, videos or voice samples from social media or public sources.

2. Deepfake Creation: Using deepfake AI tools, they create fake voice recordings, videos or images.

3. Impersonation: They pretend to be someone the victim trusts, such as a bank official or senior employee.

4. Victim Contact: They contact victims through calls, video calls or messages.

5. Fraud Execution: Victims are asked to transfer money or share confidential information.

This method is becoming common in deepfake financial scams and deepfake identity fraud cases.

Types of Deepfake Scams

Deepfake Video Scam: A deepfake video scam involves fake video calls where criminals impersonate bank officials, company executives or family members.

Deepfake Identity Fraud: This involves creating fake digital identities using AI images and videos for account access or fake verification processes.

Deepfake Financial Scams: Fraudsters use fake videos of financial experts or public figures to promote fake investments or trading schemes.

How to Protect Yourself from Deepfake Scams

Protection against AI deepfake fraud requires vigilance and practical security measures.

Verify Through Multiple Channels - If you receive an unusual request via video or voice call, verify it through a different communication method. Call the person back on a known number or send a text asking for confirmation.

Look for Warning Signs - Watch for unnatural facial movements, lighting inconsistencies or lip-sync errors in deepfake videos, and for unusual background noise in deepfake voice calls. Deepfakes often struggle with rapid head movements or side profiles.

Limit Social Media Sharing - Reduce the publicly available photos, videos and voice recordings that scammers can use to create deepfake images, audio and video. Review privacy settings regularly.

Establish Code Words - Create secret verification phrases with family members and colleagues for emergency situations. This simple step can stop a deepfake scam when someone claims to need urgent help.

Question Urgency - Scammers create artificial time pressure to prevent careful thinking. Legitimate emergencies allow time for verification. Always pause and verify before acting on urgent requests.

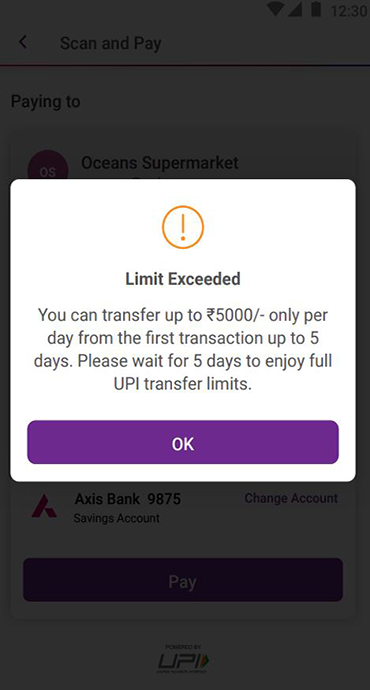

Use Multi-Factor Authentication - Enable additional security layers for banking and financial accounts. Even if scammers obtain passwords through deepfake identity fraud, it is much harder for them to access accounts without the second factor.

Stay Informed - Understanding what a deepfake scam is and staying updated on new techniques helps you recognize threats. Share what you learn with family and colleagues to build collective awareness.
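Several of the checks above (verifying on a second channel, agreeing a code word, questioning urgency) can be written down as a simple decision rule. The Python sketch below is only an illustration of that idea: the `Request` fields, the `should_proceed` function and the example code word are hypothetical names introduced here, and the rule that urgent requests need both a callback and the code word is an assumption, not an established standard.

```python
import hashlib
import hmac
from dataclasses import dataclass

# Hypothetical example: keep only a hash of the agreed code word, not the word itself.
CODE_WORD_HASH = hashlib.sha256(b"blue-heron-42").hexdigest()


@dataclass
class Request:
    """An unusual request received by call, video call or message."""
    asks_for_money_or_secrets: bool
    is_urgent: bool
    confirmed_on_known_number: bool  # you called back on a number you already had
    spoken_code_word: str = ""


def code_word_matches(spoken: str) -> bool:
    """Compare the spoken code word with the stored hash in constant time."""
    digest = hashlib.sha256(spoken.strip().lower().encode()).hexdigest()
    return hmac.compare_digest(digest, CODE_WORD_HASH)


def should_proceed(req: Request) -> bool:
    """Return True only if the request passed independent verification."""
    if not req.asks_for_money_or_secrets:
        return True  # nothing sensitive is being requested
    if not req.confirmed_on_known_number:
        return False  # always verify through a different channel first
    if req.is_urgent:
        # Assumed rule: time pressure calls for the extra code-word check.
        return code_word_matches(req.spoken_code_word)
    return True
```

For example, `should_proceed(Request(True, True, False, "blue-heron-42"))` returns `False`: even with the right code word, an urgent money request that has not been confirmed on a known number is refused.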

Real-Life Examples of Deepfake Scams

1. Arup Engineering Firm Incident: In early 2024, an employee at Arup, a global engineering firm, was deceived into transferring approximately $25.6 million to fraudsters. The scammers used deepfake technology to impersonate the company's CFO and other executives during a video conference, convincing the employee that the fund transfer was legitimate.

2. Hong Kong Multinational Firm Case: In 2024, a clerk at a multinational company in Hong Kong was tricked into transferring HK$200 million (approximately £20 million) after joining a video conference in which fraudsters used deepfake technology to impersonate senior executives. The realism of the deepfake led the employee to believe the transaction was genuine. Early reports did not name the company; Arup later confirmed it was the firm involved, so this appears to be the same incident as the Arup case, with the loss quoted in a different currency.

3. UK Energy Company Voice Scam: In 2019, the CEO of a UK-based energy firm received a phone call from someone who sounded exactly like the CEO of the firm's German parent company. The caller requested an urgent transfer of €220,000 to a Hungarian supplier. Believing the request to be authentic, the CEO authorized the transfer, only to later discover it was a deepfake voice scam.

4. Singapore Finance Director Incident: In 2025, a finance director at a multinational firm in Singapore authorized a payment of US$499,000 after attending a Zoom call with individuals he believed were senior executives. The entire meeting was a deepfake, with AI-generated visuals and voices used to impersonate the company's leadership.

Risks of Deepfake Scams

Financial Loss - Victims may lose money through unauthorized transfers or fake investment scams.

Identity Theft - Personal data can be misused for future fraud or account takeover.

Banking Fraud Risk - Deepfakes are now used in banking fraud, which makes fraud detection more complex for banks.

Reputation Damage - Businesses and individuals can face trust and credibility loss.

Emotional Stress - Victims often experience fear, panic, and stress after fraud incidents.

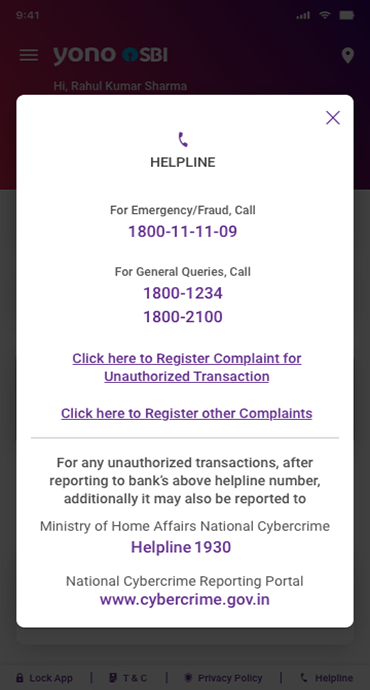

What to Do If You Are a Victim of a Deepfake Scam

If you suspect a scam:

- Immediately inform your bank
- Block your cards or accounts if required
- Report to the cybercrime reporting portal
- Change passwords and secure accounts
- Save evidence like call logs or messages

Quick action can reduce financial damage.

How Organizations and Banks Are Fighting Deepfake Fraud

Banks and financial institutions use advanced fraud detection systems to identify suspicious activity.

Common measures include:

- AI-based fraud monitoring systems
- Multi-factor authentication
- Customer awareness campaigns
- Transaction pattern monitoring

These steps help reduce banking fraud risks from deepfake scams.
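To give a rough sense of what transaction pattern monitoring means, the Python sketch below flags a transfer that goes to a payee the customer has never paid or that is far larger than the customer's usual amounts. It is a deliberately simplified illustration: the `flag_transfer` function, its parameters and the threshold of 3 standard deviations are assumptions made for this example, and real bank systems are far more sophisticated.

```python
from statistics import mean, stdev


def flag_transfer(amount, payee, past_amounts, known_payees, z_threshold=3.0):
    """Return a list of reasons why a transfer looks unusual (empty if none)."""
    reasons = []

    # A first-time payee is a common feature of impersonation fraud.
    if payee not in known_payees:
        reasons.append("new payee")

    # Compare the amount with the customer's own history using a z-score.
    if len(past_amounts) >= 2:
        mu = mean(past_amounts)
        sigma = stdev(past_amounts)
        if sigma > 0 and (amount - mu) / sigma > z_threshold:
            reasons.append("amount far above usual")

    return reasons


history = [100, 120, 90, 110, 105]
print(flag_transfer(5000, "unknown supplier", history, {"landlord"}))
print(flag_transfer(115, "landlord", history, {"landlord"}))
```

A flagged transfer would not necessarily be blocked; in a setup like this it would more plausibly trigger an extra verification step, such as a callback to the customer.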

Conclusion

Deepfake scams are an emerging cyber threat powered by artificial intelligence. From deepfake voice scams to deepfake video scams, criminals are using advanced technology to commit fraud.

Understanding what a deepfake scam is, how it works and how to identify warning signs can help protect your personal and financial information. As AI-driven banking fraud continues to grow, staying informed and alert is essential.

Always verify unusual requests, use secure digital banking channels and report suspicious activity immediately.